Prompt to Video

Tree People (2023)

Stable Diffusion, ControlNet OpenPose, Deforum, ChatGPT, Automatic1111 and After Effects

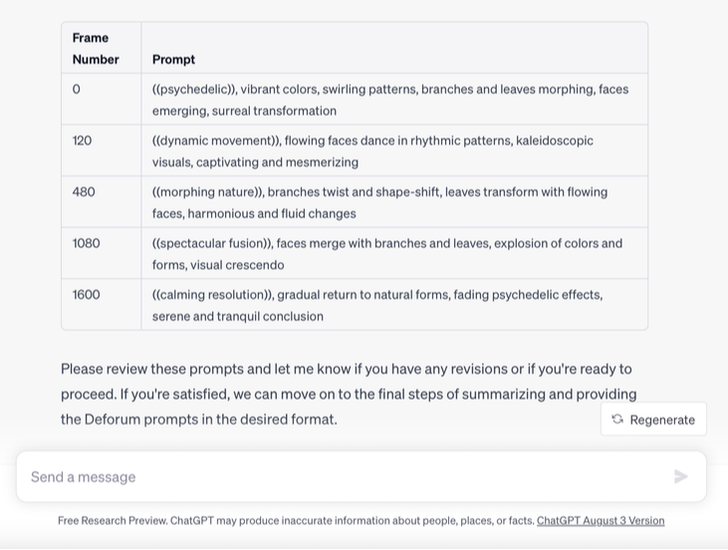

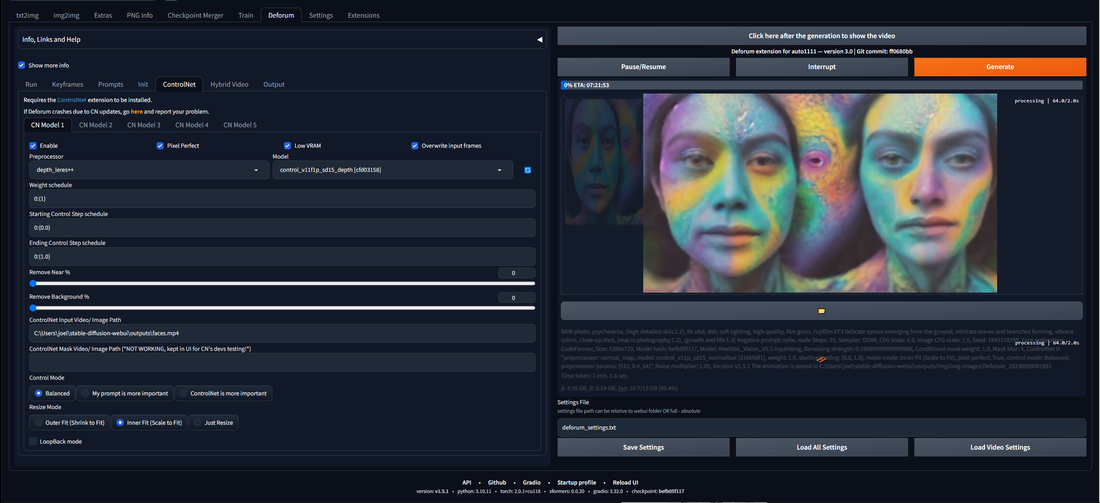

An experimental video made with Automatic1111 and the Deforum extension. Deforum lets the user enter a series of prompts keyed to frame numbers, which are used to generate images that the system blends between. I also found a script that, when run in ChatGPT, automates the prompt-writing process. I use a modified version of a ChatGPT script by Hash Milhan, which can be found here: GitHub Deforum Story Prompt Gen. In the next image you'll see the results of the script running in ChatGPT, followed by the JSON code produced by this process.

Prompts created by the ChatGPT script:

{
"0": "((psychedelic)), vibrant colors, swirling patterns, branches and leaves morphing, faces emerging, surreal transformation",
"120": "((dynamic movement)), flowing faces dance in rhythmic patterns, kaleidoscopic visuals, captivating and mesmerizing",
"480": "((morphing nature)), branches twist and shape-shift, leaves transform with flowing faces, harmonious and fluid changes",
"1080": "((spectacular fusion)), faces merge with branches and leaves, explosion of colors and forms, visual crescendo",
"1600": "((calming resolution)), gradual return to natural forms, fading psychedelic effects, serene and tranquil conclusion"
}
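Deforum reads the keys of this JSON as frame numbers, so each prompt takes effect at its frame. The Python sketch below parses a schedule of the same shape, looks up which prompt governs a given frame, and estimates how long each prompt stays on screen. It assumes a simplified model in which a prompt holds until the next keyframe (Deforum's own transitions between prompts are not reproduced), the prompts are abbreviated to their leading tag, and the 2000-frame length and 15 fps rate are hypothetical values rather than this project's settings.

```python
import json
from bisect import bisect_right

# Prompt schedule in the same shape as the JSON above: frame number -> prompt.
# Prompts are abbreviated to their leading tag to keep the example short.
SCHEDULE_JSON = """{
  "0": "((psychedelic))",
  "120": "((dynamic movement))",
  "480": "((morphing nature))",
  "1080": "((spectacular fusion))",
  "1600": "((calming resolution))"
}"""


def load_schedule(text):
    """Parse the JSON into (frame, prompt) pairs sorted by frame number."""
    raw = json.loads(text)
    return sorted((int(frame), prompt) for frame, prompt in raw.items())


def active_prompt(schedule, frame):
    """Return the prompt of the latest keyframe at or before `frame`.

    Simplified model (an assumption): a prompt stays in effect until the
    next keyframe. Deforum's own blending between frames is not modeled.
    """
    keyframes = [f for f, _ in schedule]
    i = bisect_right(keyframes, frame) - 1
    if i < 0:
        raise ValueError(f"no prompt scheduled at or before frame {frame}")
    return schedule[i][1]


def segment_seconds(schedule, total_frames, fps):
    """Seconds of screen time for each prompt, given clip length and frame rate."""
    ends = [f for f, _ in schedule[1:]] + [total_frames]
    return [(prompt, (end - start) / fps)
            for (start, prompt), end in zip(schedule, ends)]


schedule = load_schedule(SCHEDULE_JSON)
print(active_prompt(schedule, 500))  # ((morphing nature))
# total_frames=2000 and fps=15 are hypothetical values.
for prompt, secs in segment_seconds(schedule, total_frames=2000, fps=15):
    print(f"{secs:6.1f}s  {prompt}")
```

Sorting by the integer frame number matters because JSON object keys are strings; sorting them as text would place "1080" before "120".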

Next, the JSON prompt is pasted into Deforum, which turns it into a series of images and stitches them together into a video. That video is then fed back into Deforum as the base for a more specific series of alterations. In this case, I wrote a prompt that produced more organic, plant-like images, using the psychedelic face video as both the ControlNet input video and the Optical Flow init frames. Further processing was done with the FILM interpolation algorithm. A third processing pass used the Prompt Travel Post Processing Pipeline GUI by Kahsolt, which can be found here: Prompt Travel Post Processing Pipeline
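Frame interpolation such as FILM synthesizes in-between frames, which smooths the frame-to-frame jumps in a Deforum render. Google's reference FILM implementation interpolates recursively (its `times_to_interpolate` setting), with each pass inserting a midpoint frame between every pair of adjacent frames. The sketch below only does the frame-count arithmetic for that scheme; the clip length and frame rate are hypothetical, and Deforum's built-in FILM option may expose the interpolation amount differently.

```python
def film_output_frames(n_frames, times_to_interpolate):
    """Frame count after recursive midpoint interpolation.

    Each pass inserts one new frame between every pair of adjacent frames,
    so after t passes each of the (n - 1) gaps holds 2**t - 1 new frames.
    """
    if times_to_interpolate < 0:
        raise ValueError("times_to_interpolate must be >= 0")
    if n_frames < 2:
        return n_frames  # nothing to interpolate between
    return (n_frames - 1) * 2 ** times_to_interpolate + 1


# Hypothetical source clip: 2000 frames rendered at 15 fps.
source_frames, fps = 2000, 15
for t in (1, 2):
    out = film_output_frames(source_frames, t)
    new_fps = fps * 2 ** t
    print(f"t={t}: {out} frames -> {out / fps:.1f}s at {fps} fps "
          f"(slow motion) or {out / new_fps:.1f}s at {new_fps} fps")
```

Raising the playback rate by the same factor keeps the clip close to its original length while smoothing the motion; leaving the rate unchanged stretches the clip into slow motion.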

AI War Photography

Operation Achilles (2023)

ChatGPT, Midjourney, Photoshop Generative Fill

As a young man, I was part of the International Security Assistance Force (ISAF) in Afghanistan, where I served as an Airborne Infantryman with the 82nd Airborne. One of the most famous campaigns I was part of was Operation Achilles. Working with an international coalition, our unit surrounded and captured the Taliban stronghold of Musa Qala in Helmand Province. The battle was long and intense, involving a helicopter insertion, compound clearing, and firefights throughout. I hardly had time to process anything in the moment, let alone take pictures. This project is my attempt to process and understand what happened all those years ago. Each image started as a prompt generated in ChatGPT, which was fed into Midjourney for image generation. Cleanup was done in Photoshop, where I used Generative Fill to fix minor rendering errors from Midjourney.

ChatGPT prompt:

Describe a battle from operation Achilles in Afghanistan

ChatGPT response used as starting prompt in Midjourney:

The battle started with a massive barrage of artillery and airstrikes, targeting Taliban positions and infrastructure. The thunderous sound of explosions echoed through the surrounding valleys as coalition forces unleashed their firepower upon the enemy's defenses. This initial assault was intended to weaken the Taliban's resolve and degrade their defensive capabilities. Once the bombardment ceased, ground forces moved forward, traversing the treacherous terrain under the watchful eye of attack helicopters circling above. The soldiers cautiously advanced, clearing compounds, engaging in close-quarter combat, and methodically neutralizing enemy fighters. The Taliban responded with a fierce resistance, utilizing a combination of small arms fire, rocket-propelled grenades (RPGs), and improvised explosive devices (IEDs). The battle intensified as the insurgents employed guerrilla tactics, taking advantage of their knowledge of the local terrain and the civilian population. Coalition troops had to exercise caution to avoid civilian casualties and ensure the safety of non-combatants caught in the crossfire.

Drawings to AI

Abstract (2022)

Micron Pens and Midjourney

Using my own abstract sketch as input, I generated a series of colorful abstract images based on its original style. I've found that abstract art works particularly well with AI because both are chaotic by nature. The AI takes over where the drawing ends, expanding its forms and patterns and bringing new color and life to the image.

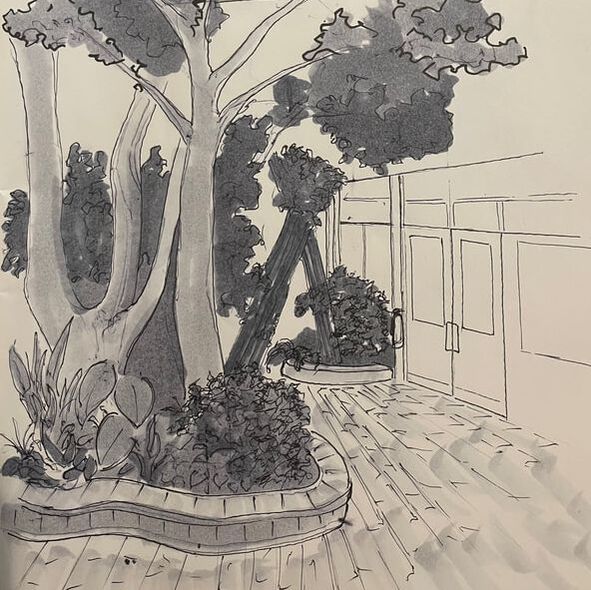

Botanical Gardens (2023)

Micron Pens, Copic Markers and Midjourney

I used a rough sketch, drawn at the Botanical Gardens in Buffalo, as the basis for a new AI-generated image. I find this technique useful in the proof-of-concept/prototyping phase: it lets me get an idea out of my head and over to the art team for production much faster than traditional methods.

Live Action Conversion

Legal Disclaimer

The content in the following section is a personal endeavor and the artwork featured herein is based on pre-existing works that I do not own the rights to. The purpose of this project is purely creative and non-commercial, and it is not intended to infringe upon the rights of the original creators or claim ownership of their work. All credit and recognition for the original work belong to their respective owners. If you are the rightful owner of any of the original works and have concerns regarding their use in this project, please contact me, and I will take immediate action to address your concerns.

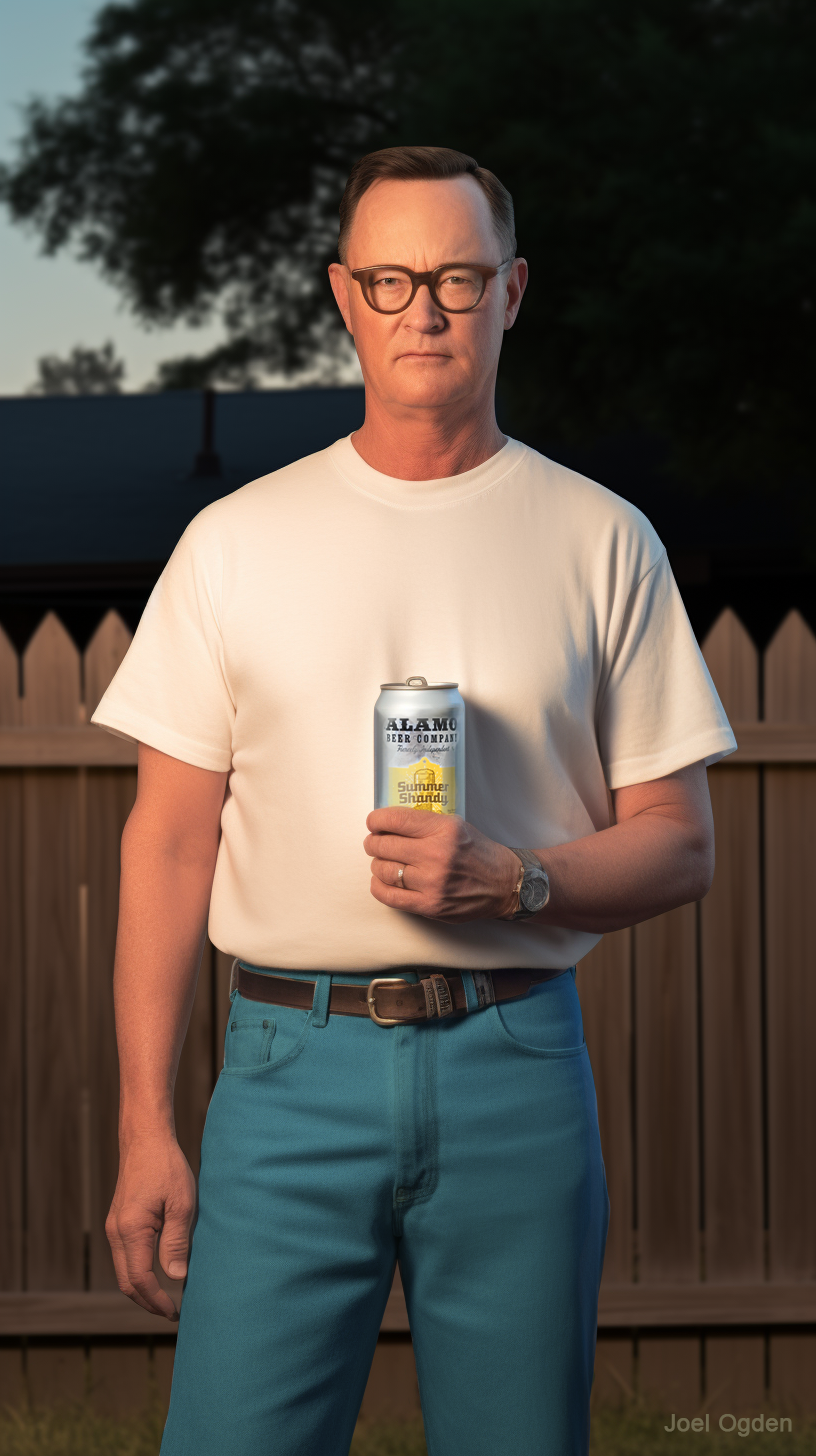

King of the Hill (2023)

Midjourney, ChatGPT, Photoshop Generative Fill

A personal project taking on the challenge of creating a lifelike human character from cartoon source material. The initial prompts were generated in ChatGPT and fed into Midjourney along with photo references of the cartoon character, cosplayers dressed as the character, and actors I felt would play the role well. Over many iterations, I selected the images with the desired traits and used them as intermediate references for the next round of generation. The images were then brought into Photoshop for additional cleanup and color correction, using Photoshop's Generative Fill to fix issues from Midjourney, e.g. extra fingers, facial deformities, and stray text and logos.

Prompt Example for Hank:

--ar 9:16 (photo link) Bright, sunny Texas day. Standing in front of a tall, brown wood picket fence. Ultra high resolution, real life photograph of real person. White plain tee shirt tucked into blue jeans. Holding a can of beer. Hank Hill played by Tom Hanks. Portrait photography. Award winning photograph. 5 --s 50 --v 5.1 --style raw
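Midjourney's documentation places image prompts (URLs) at the start of a prompt and parameters such as `--ar`, `--s`, `--v` and `--style` at the end. When a prompt is reused across many iterations, a small helper can keep that ordering consistent. The `build_mj_prompt` function below is hypothetical and only formats text (it does not call Midjourney), and the example URL is a placeholder standing in for the "(photo link)" above.

```python
def build_mj_prompt(text, image_urls=(), **params):
    """Assemble a Midjourney-style prompt: image URLs, then text, then parameters.

    Keyword arguments become --flags in the order given; underscores turn into
    hyphens, and a value of True emits the bare flag (e.g. tile=True -> --tile).
    """
    parts = list(image_urls) + [text.strip()]
    for name, value in params.items():
        flag = "--" + name.replace("_", "-")
        parts.append(flag if value is True else f"{flag} {value}")
    return " ".join(parts)


prompt = build_mj_prompt(
    "Bright, sunny Texas day. Hank Hill played by Tom Hanks. Portrait photography.",
    image_urls=["https://example.com/hank-reference.png"],  # placeholder URL
    ar="9:16", s=50, v=5.1, style="raw",
)
print(prompt)
```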

Rick and Morty (2022)

Midjourney, ChatGPT

My first attempt at a live-action conversion of a cartoon, based on the popular series Rick and Morty. Because the source material is so simple, the characters' expressions were key to conveying their personalities. Rick needed to project intelligence and frustration, with the potential for insane outbursts. Morty needed to look terrified of Rick and his insane interstellar antics. Morty's wide-eyed expression was achieved by feeding in stills from the cartoon, along with other dramatic expressions from comic strips and illustrations.

Video to Video

Digital Human Test (2023)

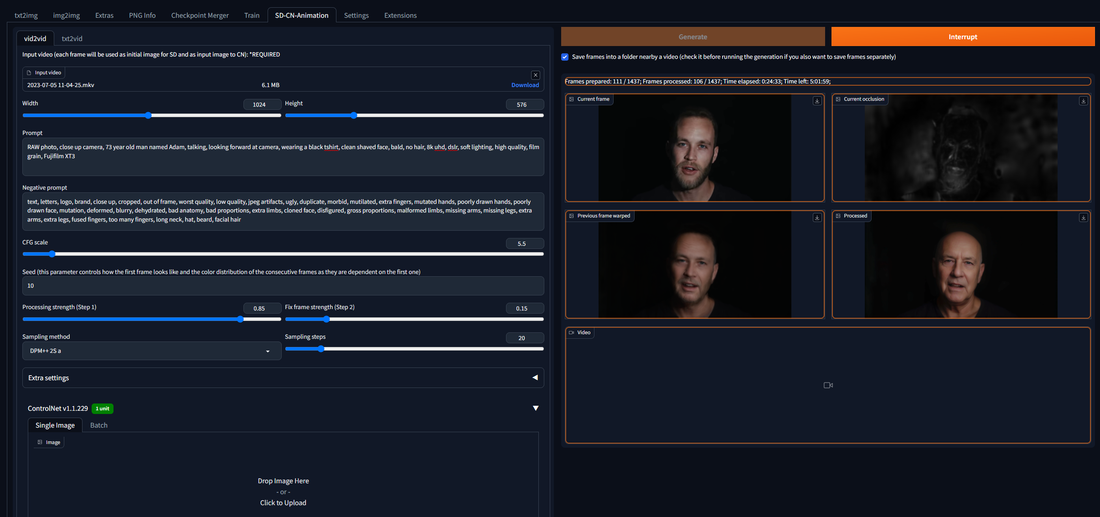

Stable Diffusion, ControlNet OpenPose, SD-CN-Animation, and Automatic1111

One of my early tests in AI video production. I used a live-action video as a base, then generated an AI character whose movements matched the reference. The focus was on facial animation. Future tests will try to resolve the flickering. The video was produced with the SD-CN-Animation extension in Automatic1111, using the Realistic Vision model and the ControlNet OpenPose preprocessor.
